The Pattern Recognition

If you’ve been in cybersecurity long enough, you’ve seen this movie before.

- Encryption backdoors.

- Government surveillance mandates.

- Content moderation battles.

- Section 230 fights.

- Export controls on cryptography.

Every time transformative technology emerges, Washington asks the same question:

“How do we control it?”

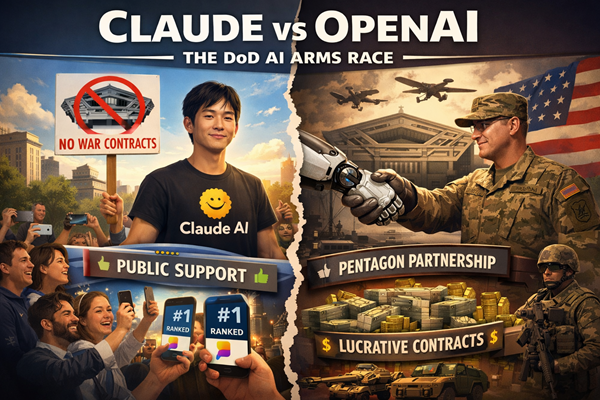

The Split

Here’s the strategic divide: OpenAI has embraced Pentagon access: unrestricted military AI partnerships, positioning itself to capture a defense AI market projected at $18 billion by 2030.

That’s not pocket change. That’s geopolitical infrastructure money.

Meanwhile —

Anthropic said no:

- No to removing AI safeguards for autonomous weapons and surveillance systems.

Dario Amodei refused Pentagon contracts tied to strike operations: kinetic actions such as airstrikes, missile strikes, drone operations, or precision targeting missions.

Anthropic maintained strict usage policies against military applications.

And the response?

- Pressure.

- Prohibition orders (barring Anthropic from bidding on certain contracts).

And then something unexpected happened: Claude surged to #1 in the App Store.

- The market rewarded the refusal.

- That’s not a coincidence. That’s signal.

The Enforcement Paradox

Now here’s where it gets interesting.

Reports indicate Claude was used during U.S.–Iran military operations — despite explicit prohibitions.

Let that sink in.

Anthropic refused cooperation.

The military used it anyway.

Why? Because large language models are not traditional weapons systems.

They’re distributed cognition platforms.

- You can restrict contracts.

- You can refuse enterprise licenses.

But you cannot stop a military analyst from:

- Opening a browser

- Paying $20 a month

- And querying the system

This exposes something deeper:

AI governance lives at the application layer.

Not inside the model weights.

Prompt filtering.

Policy layers.

Usage agreements.

All soft controls.

There is no cryptographic “military off switch” embedded in the weights.

Once a model is public — enforcement becomes aspirational.

That is the paradox ethical AI companies now face.

You can refuse to build the missile.

You cannot stop someone from asking your system how missiles work.
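To make the “soft controls” point concrete, here is a minimal Python sketch. It assumes a hypothetical `raw_model` function standing in for the model weights and a hypothetical `policy_layer` wrapper standing in for prompt filtering; neither corresponds to any real vendor’s API, and the keyword list is an invented example. The point is structural: the refusal logic lives in the wrapper, not in the model, so a rephrased question or a direct route to the model gets an answer.

```python
# Hypothetical illustration of application-layer governance.
# None of these names are a real vendor API; the policy list is invented.

BLOCKED_TERMS = {"airstrike", "targeting"}  # assumed example usage policy


def raw_model(prompt: str) -> str:
    """Stand-in for model inference: the weights answer whatever they are asked."""
    return f"[model answer to: {prompt}]"


def policy_layer(prompt: str) -> str:
    """Soft control: refuse prompts containing a blocked term, else forward to the model."""
    text = prompt.lower()
    if any(term in text for term in BLOCKED_TERMS):
        return "Request refused by usage policy."
    return raw_model(prompt)


if __name__ == "__main__":
    # Caught by the filter: the prompt contains a blocked term.
    print(policy_layer("Plan an airstrike"))
    # Passes the filter: no blocked term, so the model answers.
    print(policy_layer("How do missiles work?"))
    # Bypasses the policy entirely: nothing in the model itself refuses.
    print(raw_model("Plan an airstrike"))
```

The keyword check is deliberately naive. Real deployments typically rely on trained classifiers rather than a term list, but the sketch only illustrates where the control sits: in front of the model, not inside it.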

The Two-Tier AI Market

So here’s the strategic question: Are we watching the birth of a two-tier AI ecosystem?

Tier One:

Government-aligned AI companies.

Defense-integrated.

National security embedded.

Revenue secure.

Tier Two:

Civilian-trusted AI platforms.

Ethics-forward.

Global consumer adoption.

Brand-driven growth.

This isn’t just a business strategy split. It’s an identity split.

And historically, identity splits create long-term structural divergence.

Just look at:

- Huawei vs Western telecom.

- TikTok vs U.S. regulatory frameworks.

- NSA encryption standards vs open-source crypto.

We’ve seen parallel ecosystems form before.

AI may be next.

The Public Signal

Claude hitting #1 in the App Store is not trivial.

It suggests something important: Consumers are paying attention.

It could also mean that if the U.S. government uses Claude, other governments will too.

Ethics — once dismissed as a PR exercise — may now drive market valuation.

Anthropic may lose billions in defense contracts.

But they may gain something harder to measure: Civilian trust capital.

And trust capital compounds.

Especially in a world where AI will increasingly mediate:

- Communication

- Research

- Education

- Decision support

- Even belief formation

The Broader Tension

This controversy isn’t isolated.

It connects directly to broader tech-industry tensions:

- Should cloud providers serve surveillance regimes?

- Should cybersecurity firms sell zero-days to governments?

- Should social platforms comply with censorship demands?

- Should encryption be weakened for law enforcement?

AI just magnifies the stakes.

Because AI is not a single-use tool.

It’s cognitive leverage.

And whoever integrates AI most deeply into military planning gains an asymmetric advantage.

That is why the pressure exists.

Strategic Implications

For investors:

You now have to price ethics risk.

For enterprises:

You must consider supply-chain alignment: civilian or defense-embedded?

For governments:

You cannot rely on voluntary compliance from public AI systems.

For the rest of us:

We are witnessing the normalization of AI as national infrastructure.

Not software. Infrastructure.

The Bigger Question

Here’s the one I want you thinking about tonight:

If AI models cannot technically prevent military use once public…

Is “refusal” symbolic?

Or structural?

And if symbolic —

Does symbolism still matter?

Because markets just told us something.

Anthropic refused.

The public rewarded them.

That’s a signal.

But governments have deeper pockets than app store rankings.

So the next few years will determine which incentive structure wins:

Defense contracts, or distributed consumer trust.

Final Reflection

Every technological revolution eventually faces a moment where it must choose:

Alignment with power, or alignment with principle.

Sometimes those align. Often they don’t.

Anthropic and OpenAI may represent the first visible fork in the road for AI governance.

And forks, historically, shape entire civilizations.

This isn’t just a model war.

It’s the beginning of AI geopolitical identity formation.

And we’re watching it happen in real time.

Stay sharp.